Deep Packet Inspection

Modern enterprises face a constant barrage of network-based threats — from commodity malware to sophisticated nation-state intrusions. Traditional firewalls and intrusion detection systems, once the backbone of perimeter defense, often lack the granularity to deal with modern, encrypted, and evasive traffic. Enter Deep Packet Inspection (DPI) — a powerful technology that inspects not just packet headers, but the payloads themselves, to identify, classify, and sometimes block network traffic.

What is Deep Packet Inspection?

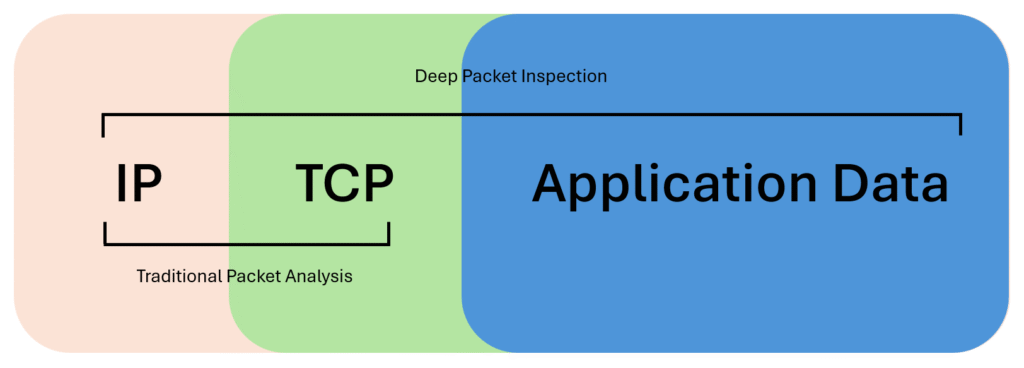

At its core, DPI is a method of examining network traffic beyond the OSI layer 3 and 4 headers (IP and TCP/UDP). Unlike basic packet filtering that only checks source/destination addresses or ports, DPI analyzes the full contents of the packet, including application data. This allows security devices to detect:

- Malware signatures

- Command-and-control traffic

- Policy violations (e.g., peer-to-peer traffic, data exfiltration attempts)

- Encrypted traffic anomalies (via TLS inspection or metadata analysis)

Because it sees application-layer content rather than only addresses and ports, DPI provides far more insight than traditional packet filtering, which makes it a vital tool in modern network defense.
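To make the header-versus-payload distinction concrete, here is a minimal sketch that splits a raw IPv4/TCP packet into its layer 3/4 header fields and its application payload. The function names, the sample packet, and the `/admin` check are illustrative assumptions, not part of any particular DPI product, and the parser skips checksum validation and other protocols.

```python
import struct


def split_ipv4_tcp(packet: bytes):
    """Split a raw IPv4/TCP packet into header fields and application payload."""
    ihl = (packet[0] & 0x0F) * 4            # IPv4 header length in bytes
    if packet[9] != 6:                      # protocol number 6 = TCP
        raise ValueError("not a TCP packet")
    src = ".".join(str(b) for b in packet[12:16])
    dst = ".".join(str(b) for b in packet[16:20])
    tcp = packet[ihl:]
    sport, dport = struct.unpack("!HH", tcp[:4])
    data_offset = (tcp[12] >> 4) * 4        # TCP header length in bytes
    headers = {"src": src, "dst": dst, "sport": sport, "dport": dport}
    return headers, tcp[data_offset:]


def build_sample_packet(payload: bytes) -> bytes:
    """Hypothetical helper: build a minimal packet (checksums left at zero)."""
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40 + len(payload), 0, 0, 64, 6, 0,
                     bytes([192, 0, 2, 10]), bytes([198, 51, 100, 7]))
    tcp = struct.pack("!HHIIBBHHH", 49152, 80, 0, 0, 5 << 4, 0x18, 65535, 0, 0)
    return ip + tcp + payload


headers, payload = split_ipv4_tcp(build_sample_packet(b"GET /admin HTTP/1.1\r\n\r\n"))
port_filter_allows = headers["dport"] in {80, 443}   # header-only decision
dpi_flags = b"/admin" in payload                     # payload-aware decision
```

A header-only filter sees an ordinary connection to port 80 and lets it through, while the payload check can tell that the request targets a path the policy cares about.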

How DPI Works

A DPI engine typically performs several sequential operations:

- Packet capture – Intercepts traffic at the gateway or inline appliance.

- Reassembly – Reassembles fragmented IP packets and reorders TCP segments to reconstruct complete sessions.

- Pattern matching / heuristic analysis – Compares payload data against known malware signatures, regex rules, or protocol decoders.

- Policy enforcement – Allows, blocks, or flags traffic depending on predefined rules and the outcome of analysis.

- Logging and reporting – Stores metadata for forensic analysis, threat hunting, or compliance audits.
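The stages above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the `Segment` type, the two signatures, and the block/flag policy are hypothetical, and the reassembly step only sorts segments by sequence number (it ignores retransmissions, overlaps, gaps, and sequence wraparound, which a real engine must handle).

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    flow: tuple   # (src, sport, dst, dport)
    seq: int      # TCP sequence number of the first payload byte
    data: bytes


# Hypothetical signature set; real rulesets are far larger.
SIGNATURES = {
    "eicar-test-string": re.compile(rb"EICAR-STANDARD-ANTIVIRUS-TEST-FILE"),
    "sql-injection-probe": re.compile(rb"union\s+select", re.IGNORECASE),
}


def reassemble(segments):
    """Reassembly: order captured segments and rebuild one byte stream per flow."""
    streams = {}
    for seg in sorted(segments, key=lambda s: s.seq):
        streams.setdefault(seg.flow, bytearray()).extend(seg.data)
    return {flow: bytes(buf) for flow, buf in streams.items()}


def inspect(segments, block_on=frozenset({"eicar-test-string"})):
    """Pattern matching, policy enforcement, and logging for captured segments."""
    log = []
    for flow, stream in reassemble(segments).items():
        hits = [name for name, rx in SIGNATURES.items() if rx.search(stream)]
        if any(h in block_on for h in hits):
            action = "block"
        elif hits:
            action = "flag"
        else:
            action = "allow"
        log.append({"flow": flow, "hits": hits, "action": action, "bytes": len(stream)})
    return log


flow = ("192.0.2.10", 49152, "198.51.100.7", 80)
# The pattern is split across two segments that arrive out of order.
captured = [Segment(flow, 1008, b"on SeLeCt password"), Segment(flow, 1000, b"id=1 uni")]
report = inspect(captured)
```

Matching on the reassembled stream rather than on individual packets is what lets the rule fire here: neither segment contains the full pattern on its own.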

Modern DPI solutions often incorporate machine learning to detect zero-day exploits and unusual behavioral patterns that may not match a known signature.

Use Cases for DPI

- Intrusion Prevention Systems (IPS): DPI is the engine that enables IPS to detect malicious payloads.

- Next-Generation Firewalls (NGFW): DPI powers application awareness, letting administrators distinguish among Slack, Zoom, and Tor traffic even when all three run over port 443.

- Data Loss Prevention (DLP): DPI helps detect sensitive data (credit cards, PHI, PII) leaving the network.

- Traffic Shaping and QoS: Service providers use DPI to prioritize certain applications and manage bandwidth.
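As an illustration of the DLP use case, the sketch below scans a payload for candidate payment card numbers and keeps only those that pass the Luhn checksum, a common way to cut down false positives from arbitrary digit runs. The regular expression and function names are assumptions made for this example; production DLP rules also check issuer prefixes, context keywords, and encodings.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(rb"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:        # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(payload: bytes):
    """Return Luhn-valid card-like numbers found in a payload."""
    found = []
    for match in CARD_CANDIDATE.finditer(payload):
        digits = re.sub(rb"\D", b"", match.group()).decode("ascii")
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            found.append(digits)
    return found


leaks = find_card_numbers(b"card=4111 1111 1111 1111&x=1")
```

The number used here is a widely published test value, not a real account. Changing its final digit makes the checksum fail, so the payload would no longer be reported.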

Security and Privacy Trade-offs

While DPI is an invaluable cybersecurity tool, it comes with real costs and controversy:

- Performance impact: DPI is computationally heavy. High-throughput environments often need dedicated hardware acceleration (ASICs, FPGAs) or cloud offloading.

- Privacy concerns: DPI can reveal the exact content of user communications, raising ethical and regulatory questions (especially under GDPR or Australia’s Privacy Act).

- Encryption blind spots: With over 80% of traffic now encrypted, DPI increasingly relies on TLS interception (man-in-the-middle proxying), which adds latency and creates key management challenges.

- False positives: Aggressive policies can disrupt business-critical apps if tuning isn’t precise.

The Role of AI in Deep Packet Analysis

The explosion of encrypted traffic, polymorphic malware, and stealthy adversarial techniques has pushed DPI beyond the limits of signature-based detection. Artificial Intelligence (AI) and Machine Learning (ML) are stepping in to fill the gap.

How AI Enhances DPI

- Anomaly Detection: AI models can establish baselines of “normal” network behavior and flag deviations (e.g., data exfiltration at odd hours, unusual TLS handshakes).

- Zero-Day Threat Recognition: Instead of relying on known signatures, ML classifiers can identify malicious patterns in traffic that indicate novel exploits.

- Adaptive Rule Tuning: AI can reduce false positives by continuously learning from feedback, automatically adjusting DPI policies without constant human intervention.

- Encrypted Traffic Insights: Even without decrypting payloads, AI can analyze flow metadata (packet sizes, timing, entropy) to detect covert channels.

- Threat Correlation: AI-enhanced DPI can correlate anomalies with other telemetry (endpoint logs, SIEM alerts) to provide higher-confidence detections.
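Two of the ideas above, baselining and metadata analysis without decryption, can be illustrated with plain statistics. The sketch below is a deliberately simple stand-in for an ML model: it computes the Shannon entropy of a byte string and flags a flow whose outbound volume sits far from a baseline using a z-score. The function names, the threshold of 3.0, and the sample numbers are assumptions for illustration only.

```python
import math
from collections import Counter
from statistics import mean, pstdev


def shannon_entropy(data: bytes) -> float:
    """Entropy in bits per byte: near 0 for repetitive data, up to 8 for random-looking data."""
    if not data:
        return 0.0
    n = len(data)
    return -sum((c / n) * math.log2(c / n) for c in Counter(data).values())


def zscore(value: float, baseline) -> float:
    """Distance of a value from the baseline mean, in standard deviations."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        return 0.0 if value == mu else math.inf
    return (value - mu) / sigma


def is_anomalous(value: float, baseline, threshold: float = 3.0) -> bool:
    """Flag values more than `threshold` standard deviations from the baseline."""
    return abs(zscore(value, baseline)) > threshold


# Hypothetical baseline: bytes sent per flow by one host during normal hours.
baseline_bytes_out = [1000, 1100, 950, 1050, 1000]
suspicious = is_anomalous(50_000, baseline_bytes_out)
normal = is_anomalous(1030, baseline_bytes_out)
```

A trained model would combine many such features (packet sizes, inter-arrival times, handshake parameters) rather than thresholding a single one, but the principle of comparing observed metadata against a learned baseline is the same.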

Benefits of AI in DPI

- Faster detection of emerging threats.

- Reduced analyst fatigue from false positives.

- Greater efficiency in high-throughput networks.

- Ability to scale DPI to cloud and distributed environments.

Challenges

- Training data quality: Biased or incomplete datasets can reduce detection accuracy.

- Adversarial evasion: Attackers are developing techniques to fool ML models.

- Explainability: Security teams need transparent AI decisions, especially for compliance and forensics.

Best Practices for Deploying DPI

- Define clear objectives – Whether your goal is malware detection, compliance, or QoS, scope determines your DPI configuration.

- Minimize privacy intrusion – Only inspect what you need. Avoid blanket interception of sensitive services (e.g., banking, healthcare).

- Use selective TLS inspection – Use allowlists and denylists to control which encrypted destinations are decrypted and inspected.

- Scale with hardware acceleration – For high-bandwidth enterprises, offload DPI tasks to specialized appliances or distributed architectures.

- Regular tuning and updates – DPI rulesets must evolve with new threats. Automate signature updates and review false positives regularly.

- Adopt AI-driven augmentation – Use AI as a complement, not a replacement, to human judgment in network defense.
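Selective TLS inspection, for example, often comes down to a lookup on the server name a client requests. The sketch below is a minimal policy function under stated assumptions: the hostname patterns, the `tls_action` name, and the "inspect by default" choice are all hypothetical, and real products typically use vendor-maintained URL categories rather than hand-written lists.

```python
from fnmatch import fnmatchcase

# Hypothetical policy lists using reserved example domains.
BYPASS_PATTERNS = ["*.bank.example", "*.health.example"]      # never decrypt
INSPECT_PATTERNS = ["*.fileshare.example", "paste.example"]   # always decrypt


def tls_action(sni: str, default: str = "inspect") -> str:
    """Decide whether to decrypt a TLS session based on its requested server name."""
    host = sni.lower().rstrip(".")
    # Bypass rules are checked first so privacy-sensitive services win on conflict.
    if any(fnmatchcase(host, pattern) for pattern in BYPASS_PATTERNS):
        return "bypass"
    if any(fnmatchcase(host, pattern) for pattern in INSPECT_PATTERNS):
        return "inspect"
    return default


decisions = {name: tls_action(name) for name in
             ["Login.Bank.Example", "uploads.fileshare.example", "unknown.example"]}
```

Evaluating the bypass list before the inspect list is a design choice that favors privacy when a hostname matches both. Note that a pattern such as `*.bank.example` does not match the bare domain `bank.example`, so that would need its own entry.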

The Bottom Line

Deep Packet Inspection remains one of the sharpest tools in a network engineer’s arsenal. But with the rise of encryption and sophisticated adversaries, AI-driven DPI is the new frontier. By combining the granular visibility of DPI with the adaptability of AI, defenders can better detect stealthy threats, reduce noise, and stay one step ahead in the ever-changing cybersecurity landscape.